So KinectFusion is an awesome new Microsoft algorithm for the GPU that essentially does real-time SLAM with Kinect RGBD cameras. They didn’t publish the source, but the WillowGarage PCL and OpenCV guys have been busy implementing it from the paper. And I must say, the beta versions so far in the PCL svn are quite impressive. It acts about the same as what you see in the Microsoft demo videos. On my GTX 470 it runs at 20 Hz. It does seem to lose track fairly easily and has to reset, especially with large camera movements. Plus, because it uses a dense voxel representation on the GPU, the max size is 512x512x512 voxels, which covers only a small room at reasonable resolution. This isn’t great, but I imagine that issues such as these could be fixed with better global matching (for instance color ICP) or a paging system that seamlessly transfers parts of the voxel representation between the CPU and GPU so you can model larger areas. I spent all day Saturday playing around with friends on KinectFusion and trying to use GPU-accelerated SURF matching to enforce a better global localization, but without a lot of success. We also captured some cool 3D models to voxel files. During this process, we found a bug in their saving function. Binary files were being written without being opened in binary mode, which turned every ‘\n’ byte into ‘\r\n’ on Windows. Today I submitted a patch, which I guess makes me an open-source contributor to PCL. Woohoo!
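The binary-mode bug described above is easy to reproduce in standard C++. The following is a minimal sketch, not the actual PCL patch: the helper names `writeBinary` and `readBinary` and the file name in the usage note are made up for illustration. The only point is the `std::ios::binary` flag, without which Windows translates each 0x0A byte into 0x0D 0x0A on write.

```cpp
#include <cstdint>
#include <fstream>
#include <iterator>
#include <string>
#include <vector>

// Write raw bytes to a file. std::ios::binary disables newline translation,
// so a 0x0A byte is stored as-is instead of becoming 0x0D 0x0A on Windows.
bool writeBinary(const std::string& path, const std::vector<std::uint8_t>& bytes) {
    std::ofstream out(path, std::ios::out | std::ios::binary | std::ios::trunc);
    if (!out) return false;
    out.write(reinterpret_cast<const char*>(bytes.data()),
              static_cast<std::streamsize>(bytes.size()));
    return static_cast<bool>(out);
}

// Read the whole file back, also in binary mode, to check the round trip.
std::vector<std::uint8_t> readBinary(const std::string& path) {
    std::ifstream in(path, std::ios::in | std::ios::binary);
    return std::vector<std::uint8_t>(std::istreambuf_iterator<char>(in),
                                     std::istreambuf_iterator<char>());
}
```

Calling `writeBinary("voxels.bin", data)` followed by `readBinary("voxels.bin")` should give back exactly the bytes that went in, on any platform.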

Even though I was forced to get up unreasonably early for an advisor meeting, today went remarkably well. I discovered the deadline for BioRob 2012 had been pushed back from Jan 15th to Jan 31st, which gives me a huge breather. That greatly reduces (or at least postpones) the slew of all-nighters I would have to pull next week. My advisor meeting went well (i.e. I didn’t get yelled at :)) too. I spent the afternoon compiling OpenCV and PCL to try out the new KinectFusion. I was able to get the sample code for dense stereo working in OpenCV on the GPU which should be very handy. Finally, my coauthor and I got our journal paper down to 14 pages and are pretty much ready to submit. A few more proofs, final preparations, a few comments from friends willing to look at it, and bam we should be good to go! All in all, a very busy, yet rewarding day.

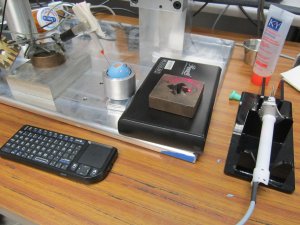

Today was “get back to the salt mines and get your shovel rusty” day. Our next set of experiments with our lab’s surgical robot Micron (white handled gizmo on the right in the black plexiglass holder) is designed for retinal procedures. To begin more realistic evaluation, I am trying to create a fake, or phantom, eye for which we can do tests. Luckily, we have these blue rubber eye-ball phantoms from JHU so mostly I just have to adapt the setup for our needs. Since retinal surgeries create little holes, or ports, in the side of the eye to stick tools through to get back to the retina on the back of the eye, I spent my day carving holes in blue rubber and trying to fit small 3 mm tubes to form a trocar. I mostly succeeded by dinner time and went to pick a friend up at the airport and eat at Cracker Barrel, which was nice. Upon returning, I discovered that our tool didn’t work very well at all, possibly because the rubber eye couldn’t rotate very well in my metal spherical holder. KY lubricant to the rescue! A dab in the bottom and bam, suddenly the eye could move around as easily as you looking right and left. Re-running a few preliminary tests showed some visual improvement with our surgical robot so I’m happy. Hopefully my advisor will be happy too at our insanely early meeting tomorrow. I called it quits early around midnight and headed home to write some of my thesis (and write this blog while I try to prevent myself from getting fat by riding an exercise bike). It’s all about multi-tasking – oh and not having a life 😀

Well it’s back to the salt mines: this morning I departed from Orlando (left) and arrived at Pittsburgh (right). It is times like these that I wonder why I chose CMU. Then I remember the awesome robotics I get to do and I’m happy again. In other news, I finished up my part of the journal (14.5 pages) and handed it off to my partner in crime. Then I went on an airport and Steak ‘n’ Shake run. Oh and at T-112 days, I started my thesis!

This post details my experiences with Intel’s hardware encoding Quick Sync functionality that is part of their new processors. I’ve spent the past three days struggling with the Intel Media SDK to implement encoding of video streams to H.264 and wanted to (a) document the experience and (b) provide some information that I’ve pieced together from various places to anybody else who might be interested in playing around with Intel’s latest and greatest.

The Need

When writing computer vision applications that process fairly high-resolution imagery in real-time, significant amounts of CPU are used and lots of data is generated. For my application in surgical robotics, I have two stereo cameras running at 1024 x 768 @ 30 Hz = 135 MB/s in full RGB video, in addition to any supplementary data generated by the system. This is a lot of data to process, but worse yet: what do you do with the data once you’ve processed it? Saving results isn’t very easy because your options are pretty much limited to the following:

- Save encoded video to disk, but this requires lots of CPU time which you are probably using for the vision system

- Resizing the video is an option, but it is uncool(TM) to save out lower resolution videos

- Save the raw video to disk, but you need a very fast disk or RAID array

- Save the raw video to RAM, but that gets expensive and requires a follow-up encoding phase

- Offload the video to another computer/GPU, but this is tricky and can require a high-speed bus (e.g. quad gigabit Ethernet or an extra PCI-e)

- 3rd party solutions, such as hardware encoder + backup solution, but this is generally pretty pricey and finicky to set up
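As a sanity check on the data-rate figure quoted above, here is the arithmetic as a small C++ sketch. It assumes two cameras in total and 3 bytes per pixel for RGB; the function and constant names are only for illustration.

```cpp
#include <cstdint>

// Raw bandwidth in bytes per second for uncompressed video.
constexpr std::uint64_t rawBytesPerSecond(std::uint64_t width, std::uint64_t height,
                                          std::uint64_t bytesPerPixel,
                                          std::uint64_t framesPerSecond,
                                          std::uint64_t cameras) {
    return width * height * bytesPerPixel * framesPerSecond * cameras;
}

// Two 1024 x 768 cameras, 3 bytes per pixel (RGB), 30 frames per second.
constexpr std::uint64_t kStereoRate = rawBytesPerSecond(1024, 768, 3, 30, 2);

// 141,557,760 bytes/s, which is exactly 135 when divided by 1024 * 1024.
static_assert(kStereoRate == 141557760ULL, "raw stereo rate in bytes per second");
static_assert(kStereoRate / (1024 * 1024) == 135, "same rate in binary megabytes");
```

At that rate an hour of raw video comes to roughly 510 GB, which is why the raw-to-disk and raw-to-RAM options get expensive quickly.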

The Solution

Luckily for us, Intel has provided a brand new and almost free solution to this problem of saving lots of video data very fast: Quick Sync (wiki), a hardware encoder/decoder built directly into the latest SandyBridge x86 chips. What does this give you? The ability to encode 1080p to H.264 at 100 fps with only a negligible increase in CPU load. I say almost free because while the functionality is free and built directly into the latest Intel processors ($320), there is some amount of work in getting the free Intel Media SDK working.

The rest of this post is going to detail my experiences over the past three days in getting Quick Sync to encode raw RGB frames (from OpenCV) to H.264 video.

Caveats/Limitations

First, it is important to know that there are some limitations with the hardware encoding:

- You need a processor that supports Intel Quick Sync and an H67 or Z68 motherboard (at the time of writing) to enable the integrated graphics section of the chip, which contains the hardware encoders/decoders.

- The integrated graphics must be plugged into a monitor/display and be actively on and showing something (ebay a super cheap small LCD if you have to).

- Only a limited number of codecs are available: H.264, VC-1, and MPEG-2.

- Additionally, a maximum resolution of 1920×1200 is available for hardware encoding. I believe you can work around this issue by encoding multiple sessions simultaneously, although it looks like the code gets more complicated. I have tested running two applications that are both encoding at the same time without any difficulties so I know that works at least.

- Finally, documentation is somewhat scarce and the limited number of posts on the Intel Media SDK forum are probably your only bet for finding answers to any issues.

Setup

My current setup is an Intel i7-2600K processor (not overclocked) on a Z68 motherboard with a GTX 460 powering two monitors and a projector. I have Visual Studio 2010 Ultimate installed for C/C++ development. Originally I had the projector plugged into the integrated graphics, but it seems that didn’t work (i.e. Quick Sync initialization would fail). I removed the graphics card and plugged one of my monitors into the integrated graphics and Quick Sync initialized fine, so I put the GTX 460 back in and plugged it into the other monitor and projector. Things were still happy with Quick Sync, so I imagine that the integrated graphics must be not only plugged in, but actively displaying something.

To get started, Windows 7 x64 had already installed Intel’s HD Graphics drivers, so I just installed the Intel Media SDK 3.0. It comes with a reference manual, a bunch of samples in C++, and two approaches to using Quick Sync:

- Via the API: This is the official, supported way with the most flexibility. Unfortunately, only elementary streams are supported, meaning the output files are literally the raw bitstream and cannot be played with VLC or other media players.

- Via DirectShow filters: For quick & dirty simple approaches, the SDK comes with some sample DirectShow filters for encoding/decoding. Requesting help on the forums regarding the DirectShow filters seems to always prompt a response along the lines of “well, our DirectShow filters are really just samples and aren’t really supported or well tested…” which doesn’t leave me with warm fuzzies.

The reason I’m mentioning these approaches is because when I first started out, I didn’t know any of this and just launched into approach #1 by looking at the sample_encode example provided with the SDK. Had I known the limitations of #1 and the possibility of #2, I would have probably tried going down the DirectShow route instead. I might look into #2 later, but #1 seems to be working fine for me at the moment.

Verifying Quick Sync Works

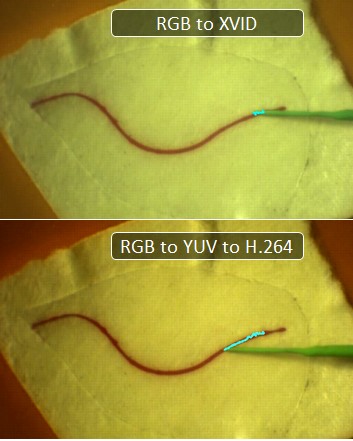

I discovered the simplest way to verify Quick Sync is working is to use GraphEdit to create a simple transcoding filter (no coding necessary). The process is described in this Intel blog post : simply download GraphEdit, then go to Graph -> Insert Filters. Select DirectShow Filters and insert “File Source (Async.)” (select a file playable with Windows Media Player) -> “AVI Splitter”, “AVI Decompressor” -> “Intel Media SDK H.264 Encoder”, “Intel Media SDK MP4 Muxer” and finally a “File Writer” (provide it with a name to save the file). Then you can run the graph and it should transcode the file for you. In my case, it converted a small ~1 minute XVID video to an H.264 video in just a few seconds. Coolnesses!

Adapting sample_encode

After reading some of the introductory material in the reference manual, browsing the sample code is usually how I best start learning something. The sample code that looked most promising was sample_encode. Unfortunately, the input to the program is a raw YUV video file (that then gets shoved through the encoder and out to a *.h264 file), but who has those lying around? So I figured the best place to start was to connect that code to my program that outputs processed video frames for saving. The processing happens in OpenCV, so essentially I wanted to bridge the gap between a cv::Mat class with raw RGB pixels and the sample_encode Quick Sync encoding/saving code. First, I added a queue, mutex, and done pointer to CEncodingPipeline::Run and replaced the call to CSmplYUVReader::LoadNextFrame with a wait for the next available frame in the queue, a memcpy, and a check of the done pointer to see if the incoming video stream had stopped (in which case make sure to set sts = MFX_ERR_MORE_DATA). Then in the input thread, I used cv::cvtColor to convert the image to YUV (or YCrCb as referred to in the documentation) and packed it into the YV12 format (or rather NV12, which carries the same data with the chroma bytes interleaved instead of stored in separate planes). After numerous false starts, this finally spit out a video file that looked good, until I noticed something fairly subtle: the colors were a bit off.
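The queue, mutex, and done flag described above amount to a small producer/consumer hand-off. Here is a minimal sketch in standard C++ under some assumptions: `FrameQueue` and `Frame` are hypothetical names, it uses `std::mutex` and `std::condition_variable` rather than whatever threading primitives the original code used, and none of it comes from sample_encode itself.

```cpp
#include <condition_variable>
#include <cstdint>
#include <mutex>
#include <queue>
#include <utility>
#include <vector>

using Frame = std::vector<std::uint8_t>;

class FrameQueue {
public:
    // Called by the vision thread for every processed frame.
    void push(Frame frame) {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            frames_.push(std::move(frame));
        }
        ready_.notify_one();
    }

    // Called by the vision thread once the incoming video stream has stopped.
    void finish() {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            done_ = true;
        }
        ready_.notify_all();
    }

    // Called by the encoding loop instead of reading a frame from a file.
    // Blocks until a frame is available. Returns false once the stream is
    // finished and the queue is drained; that is the point where the caller
    // would set sts = MFX_ERR_MORE_DATA.
    bool pop(Frame& out) {
        std::unique_lock<std::mutex> lock(mutex_);
        ready_.wait(lock, [this] { return done_ || !frames_.empty(); });
        if (frames_.empty()) return false;
        out = std::move(frames_.front());
        frames_.pop();
        return true;
    }

private:
    std::mutex mutex_;
    std::condition_variable ready_;
    std::queue<Frame> frames_;
    bool done_ = false;
};
```

Frames already queued when `finish()` is called are still delivered, so the tail of the recording is not lost.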

So apparently, among the million and one different ways to convert RGB to YUV, I got the wrong one. Well let’s go directly to the big guns: Quick Sync is an Intel product, so the Intel IPP routines should be the same right? I head over to the documentation for ippiRGBtoYUV and implement the algorithm from their docs. That works better, but the color is still slightly wrong and more saturated. So converting from RGB to YUV to feed into Quick Sync is a bust, although quite possibly there is a bug somewhere in my code.
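For reference, this is roughly what a hand-rolled RGB to NV12 conversion looks like. It is a sketch under stated assumptions, not the code used here: it picks the integer BT.601 "video range" coefficients (Y in 16..235, U and V in 16..240), which is only one of the many RGB to YUV variants and may or may not be the one Quick Sync expects. The function name `rgbToNv12` is made up.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Convert a tightly packed 8-bit RGB image (R,G,B byte order) to NV12:
// a full-resolution Y plane followed by one half-resolution plane of
// interleaved U,V pairs. The BT.601 video-range coefficients are an assumption.
std::vector<std::uint8_t> rgbToNv12(const std::uint8_t* rgb, int width, int height) {
    assert(width > 0 && height > 0 && width % 2 == 0 && height % 2 == 0);
    const std::size_t w = static_cast<std::size_t>(width);
    const std::size_t h = static_cast<std::size_t>(height);
    std::vector<std::uint8_t> nv12(w * h * 3 / 2);
    std::uint8_t* yPlane = nv12.data();
    std::uint8_t* uvPlane = nv12.data() + w * h;

    for (std::size_t y = 0; y < h; y += 2) {
        for (std::size_t x = 0; x < w; x += 2) {
            int sumR = 0, sumG = 0, sumB = 0;
            for (std::size_t dy = 0; dy < 2; ++dy) {
                for (std::size_t dx = 0; dx < 2; ++dx) {
                    const std::uint8_t* p = rgb + ((y + dy) * w + (x + dx)) * 3;
                    const int r = p[0], g = p[1], b = p[2];
                    // One luma sample per pixel.
                    yPlane[(y + dy) * w + (x + dx)] = static_cast<std::uint8_t>(
                        ((66 * r + 129 * g + 25 * b + 128) >> 8) + 16);
                    sumR += r; sumG += g; sumB += b;
                }
            }
            // One chroma pair per 2x2 block, computed from the block's mean colour.
            const int r = (sumR + 2) / 4, g = (sumG + 2) / 4, b = (sumB + 2) / 4;
            // The +128 chroma offset is added before the shift so the shifted
            // value is never negative.
            std::uint8_t* uv = uvPlane + (y / 2) * w + x;
            uv[0] = static_cast<std::uint8_t>(
                (-38 * r - 74 * g + 112 * b + 128 + (128 << 8)) >> 8);  // U
            uv[1] = static_cast<std::uint8_t>(
                (112 * r - 94 * g - 18 * b + 128 + (128 << 8)) >> 8);   // V
        }
    }
    return nv12;
}
```

With these coefficients pure white maps to Y = 235 with U = V = 128. A full-range variant would map it to Y = 255 instead, which gives a sense of how much two equally "correct" conversions can differ.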

RGB to H.264

OK, well, now what? If feeding YUV isn’t working, can we directly feed in RGB? The answer is sort of. Part of Quick Sync contains VPP or the hardware Video Pre-Processing pipeline. This is a set of filters, such as scaling, cropping, etc that you can run in hardware before the encoding step. One of the preprocessing steps is color conversion where we can convert RGB4 to NV12. RGB4 is really just RGBA where each pixel is represented by four bytes of red, green, blue, alpha. The trick is to get it to work. First, I convert OpenCV’s RGB to RGBA; that’s the easy part. In sample_encode, the trick to enabling their VPP code is to make sure that the input format vpp.In.FourCC is different than the output format vpp.Out.FourCC. So I set both in CEncodingPipeline::InitMfxVppParams:

m_mfxVppParams.vpp.In.FourCC = MFX_FOURCC_RGB4; // changed line

// in-between code, calculating frame rates, setting sizes and crops…

memcpy(&m_mfxVppParams.vpp.Out, &m_mfxVppParams.vpp.In, sizeof(mfxFrameInfo));

m_mfxVppParams.vpp.Out.FourCC = MFX_FOURCC_NV12; // added line

And boom, now I can memcpy my RGBA data into pSurf->Data.R and things magically work. You should also set pSurf->Data.G = pSurf->Data.R + 1 and pSurf->Data.B = pSurf->Data.R + 2.
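The RGB to RGBA step can also be done by hand while filling the surface. The sketch below is an illustration under assumptions, not the code used above: `copyRgbToRgba` and its `dstPitch` parameter are hypothetical, with `dstPitch` standing in for the surface's row stride in bytes (hardware surfaces are often padded, in which case one big memcpy would shear the image). The byte order matches the pointer layout just described, with green at R + 1 and blue at R + 2.

```cpp
#include <cstddef>
#include <cstdint>

// Expand tightly packed RGB rows into a 4-byte-per-pixel destination whose
// rows start dstPitch bytes apart. dst corresponds to the red pointer.
void copyRgbToRgba(const std::uint8_t* rgb, int width, int height,
                   std::uint8_t* dst, std::size_t dstPitch) {
    for (int y = 0; y < height; ++y) {
        const std::uint8_t* src = rgb + static_cast<std::size_t>(y) * width * 3;
        std::uint8_t* row = dst + static_cast<std::size_t>(y) * dstPitch;
        for (int x = 0; x < width; ++x) {
            row[4 * x + 0] = src[3 * x + 0];  // red
            row[4 * x + 1] = src[3 * x + 1];  // green
            row[4 * x + 2] = src[3 * x + 2];  // blue
            row[4 * x + 3] = 255;             // opaque alpha
        }
    }
}
```

Padding bytes between the end of a row and the next `dstPitch` boundary are left untouched.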

But what about AVIs?

So the one downside to this whole approach is that the API provided by the Intel Media SDK produces an elementary stream, not an MP4 or AVI. This is fine if you just want to get your video compressed and onto the disk, but somewhat disheartening when you actually want to see that recorded video. There are, of course, video players that can decode elementary streams, including DGAVCDec and the Intel Media SDK’s sample_dshow_player. In fact, if you compile and run sample_dshow_player, it will actually use the DirectShow filters to not only load and play the elementary H.264 you’ve generated, but also transcode it to an AVI/MP4 file. Which is very nice, but still non-optimal. In the future, I’d like to check out the DirectShow route so I can automatically generate AVI files. Furthermore, it is even possible to mux in audio, which would be nice so I can record audio of the system instead of just video.

The End Result

So there we have it: video now comes in from the cameras, is processed by my vision system, sent to Quick Sync for encoding, and saved to disk. Encoding happens faster than I can shove frames in and barely increases my CPU load (there are some memcpy operations and various API calls to manage Quick Sync and save the resulting stream to a file, but these are all relatively lightweight). I get an elementary H.264 stream out, which I will then batch convert using a modified version of the sample_dshow_player. It’s not completely optimal yet (I would prefer to output AVI/MP4 files), but for 3 days’ worth of futzing around with the Intel Media SDK, I think what I have works quite well for my needs.

Because my robot’s control system runs on a LabVIEW real-time machine, I have no recourse but to add new features in LabVIEW. Oh, I tried coding new stuff in C++ on another computer and streaming information via UDP over gigabit, but alas, additional latencies of just a few milliseconds are enough to make significant differences in performance when your control loop runs at 2 kHz. So I must code in LabVIEW as an inheritor of legacy code.

With a computer engineering background, I find that having to develop in LabVIEW fills me with dread. While I am sure LabVIEW is appropriate for something, I have yet to find it. Why is it so detestable? I thought you’d never ask.

- Wires! Everywhere! The paradigm of using wires instead of variables makes some sort of sense, except that for anything reasonably complex, you spend more time trying to arrange wires than you do actually coding. Worse, finding how data flows by tracing wires is tedious. Especially since you can’t click and highlight a wire to see where it goes – clicking only highlights the current line segment of the wire. And since most wires aren’t completely straight, you have to click through each line segment to trace a wire to the end. [edit: A commenter pointed out double clicking a wire highlights the entire wire, which helps with the tracing problem]

- Spatial Dependencies. In normal code, it doesn’t matter how far away your variables are. In fact, in older C (C89) you must declare locals at the top of a block. In LabVIEW, you need to think ahead so that your data flows don’t look like a rat’s nest. Suddenly you need a variable from half a screen away? Sure you can wire it, but then that happens a few more times and BAM! suddenly your code is a mess of spaghetti.

- Verbosity of Mathematical Expressions. You thought low-level BLAS commands were annoying? Try LabVIEW. Matrices are a nightmare. Creating them, replacing elements, accessing elements, any sort of mathematical expression takes forever. One-liners in a reasonable language like MATLAB become a whole 800×800 pixel mess of blocks and wires.

- Inflexible Real-Estate. In normal text-based code, if you need to add another condition or another calculation or two somewhere, what do you do? That’s right, hit ENTER a few times and add your lines of code. In LabVIEW, if you need to add another calculation, you have to start hunting around for space to add it. If you don’t have space near the calculation, you can add it somewhere else but then suddenly you have wires going halfway across the screen and back. So you need to program like it’s the old-school days of BASIC where you label your lines 10, 20, 30 so you have space to go back and add 11 if you need another calculation. Can’t we all agree we left those days behind for a reason? [edit: A commenter has mentioned that holding Ctrl while drawing a box clears space]

- Unmanageable Scoping Blocks. You want to take something out of an if statement? That’s easy, just cut & paste. Oh wait no, if you do that, then all your wires disappear. I hope you remembered what they were all connected to. Now I’m not saying LabVIEW and the wire paradigm could actually handle this use case, but compare this to cut & paste of 3 lines of code from inside an if statement to outside. 3 seconds, if that compared to minutes of re-wiring.

- Unbearably Slow. Why is it when I bring up the search menu for Functions that LabVIEW 2010 will freeze for 5 seconds, then randomly shuffle around the windows, making me go back and hunt for the search box so I can search? I expect better on a quadcore machine with 8 GB of RAM. Likewise, compiles to the real-time target are 1-5 minute long operations. You say, “But C++ can take even longer” and this is true. However, C++ doesn’t make compiles blocking, so I can modify code or document code while it compiles. In LabVIEW, you get to sit there and stare at a modal progress bar.

- Breaks ALT-TAB. Unlike any other normal application, if you ALT-TAB to any window in LabVIEW, LabVIEW completely re-orders the Windows Z-order so that you can’t ALT-TAB back to the application you were just running. Instead, LabVIEW helpfully pushes all other LabVIEW windows to the foreground so if you have 5 subVIs open, you have to ALT-TAB 6 times just to get back to the other application you were at. This of course means that if you click on one LabVIEW window, LabVIEW will kindly bring all the other open LabVIEW windows to the foreground, even those on other monitors. This makes it a ponderous journey to swap between LabVIEW and any other open program because suddenly all 20 of your LabVIEW windows spring to life every time you click on one.

- Limited Undo. Visual Studio has nearly unlimited undo. In fact, I once was able to undo nearly 30 hours of work to see how the code evolved during a weekend. LabVIEW, on the other hand, has incredibly poor undo handling. If a subVI runs at a high enough frequency, just displaying the front-panel is enough to cause misses in the real-time target. Why? I have no idea. Display should be much lower priority than something I set to ultra-high realtime priority, but alas LabVIEW will just totally slow down at mundane things like GUI updates. Thus, in order to test changes, subVIs that update at high frequencies must be closed prior to running any modifications. Of course, this erases the undo. So if you add in a modification, close the subVI, run it, and discover it isn’t a good modification, you have to go back and remove it by hand. Or if you broke something, you have to go back and trace your modifications by hand.

- A Million Windows. Please, please, please for the love of my poor taskbar, can we not have each subVI open up two windows for the front/back panel? With 10 subVIs open, I can see maybe the first letter or two of each subVI. And I have no idea which one is the front panel and which is the back panel except by trial and error. The age of tabs was born, oh I don’t know, like 5-10 years ago? Can we get some tab love please?

- Local Variables. Sure you can create local variables inside a subVI, but these are horribly inefficient (copy by value) and the official documentation suggests you consider shift registers, which are variables associated with loops. So basically the suggested usage for local variables is to create a for loop that runs once, and then add shift registers to it. Really LabVIEW, really? That’s your advanced state-of-the-art programming?

- Copy & Paste. So you have an N x M matrix constant and want to import or export data. Unfortunately, copy and paste only works with single cells so have fun copying and pasting N*M individual numbers. Luckily if you want to export a matrix, you can copy the whole thing. So you copy the matrix, and go over to Excel and paste it in and……….suddenly you’ve got an image of the matrix. Tell me again how useful that is? Do you magically expect Excel to run OCR on your image of the matrix? Or how about this scenario: you’ve got a wire probed and it has 100+ elements. You’d like to get that data into MATLAB somehow to verify or visualize it. So you right click and do “Copy Data” and go back to MATLAB to paste it in. But there isn’t anything to paste! After 10 minutes of Googling and trial and error, it turns out that you have to right click and “Copy Data”, then open up a new VI, paste in the data, which shows up as a control, which you can then right-click and select “Export -> Export Data to Clipboard”. Seriously?!? And it doesn’t even work for complex representations, only the real part is copied! I think nearly every other program figured out how to copy and paste data in a reasonable manner, oh say, 15 years ago?

- Counter-Intuitive Parameters. Let’s say you want to modify the parameters to a subVI, i.e. add a new parameter. Easy right? Just go to the back panel with the code and tell it which variables you want passed in. Nope! You have to go to the front panel, right-click on the generic looking icon in the top right hand corner, and select Show Connector. Then you select one of those 6×6 pixel boxes (if you can click on one) and then the variable you want as a parameter. LabVIEW doesn’t exactly go out of its way to make common usage tasks easy to find.

Now granted, there are some nice things about LabVIEW. Automatic garbage collection [or rather the implicit instantiation and memory managing of the dataflow format, as one commenter pointed out], easy GUI elements for changing parameters and getting displays, and…………well I’m sure there are a few other things. But my point is, I am now reasonably proficient in LabVIEW basics and still have no idea how people manage to get things coded in LabVIEW without wanting to tear their hair out. There are people who love LabVIEW, and I wish I knew why, because then maybe I wouldn’t feel such horrific frustration at having to develop in LabVIEW. I refuse to put it on my resume and will avoid any job that requires it. Coding in assembly language is more fun.

I have to review a couple of papers in the robotics field and was asking myself today: “What makes a good reviewer?” Let’s see. Ultimately, a reviewer serves two purposes: a judge and confidant. As a judge, a reviewer should objectively look at a body of research documented in a paper and check a number of important criteria:

- Does the research fit with the topic and tone of the place it is being published? Many research papers are good, but would be more appropriate if submitted to a different venue.

- Does the research meet the quality standards of the place it is being published? Is the research thorough and are the results representative of what you would expect?

- Does the paper present the research well? Does it clearly document the steps taken such that the results can be duplicated?

Of course, you could come up with many additional criteria by which to judge papers, and that is all well and good.

However, it is the second aspect to reviewing papers that I feel many people miss out on. A reviewer should be more than just an imperious judge. In my opinion, what turns your ye-olde standard reviewer into a good reviewer is the ability to act as a confidant. A confidant is somebody with whom you can share something important and expect frank but kind advice. Your father, sibling, close friend. Somebody who will listen to you without condescension, frustration, or the like and really wish you the best while advising you. Similarly, a researcher submitting their work for review is sharing their not-yet-published research with you. Just as a confidant is somebody who is outside the situation and can offer advice, a reviewer should offer candid, yet kind suggestions on how to make the research better. I have seen some reviewers who view their job as judge, jury, and executioner in one, shredding good work and nitpicking small ideological issues. This is not to say that a reviewer shouldn’t be completely candid – sometimes the job of a confidant is to deliver an unpleasant truth. They can note missing references, bring attention to errors, point out inconsistencies, indicate parts of the paper that are unclear, make editorial corrections, etc. However, they should also offer insights that the authors might benefit from, suggest new avenues of future research, list more appropriate venues to publish, detail improvements that could be made, etc. And often it is not what you say, but how you say it.

The line between acting judge and confidant is hard to decide and act upon sometimes, but for the poor researcher slaving away at difficult problems for months and years on end, it is the least you can do. Put more than a quick scan and a few sentences into your reviews, and aim to be a good reviewer.

Sparse encoding, i.e. finding solutions of the form Ax=b with x subject to an l1 norm, is a popular approach in signal reconstruction and now face recognition. Unlike the least-squares l2 norm solution, which can be found rapidly with SVD, finding the sparse l1 solution is a rather CPU-expensive task. One of the fastest l1 solvers, GPSR, still takes several seconds per image to process. If you profile the code, it turns out that 95% of the time is spent in matrix multiplication, which got me thinking “there must be more efficient ways to do this.” I came up with three ways to optimize GPSR:

1) Single precision floating point math

Matlab by default uses double precision floating point math, so one quick and dirty solution is to convert everything to singles before calling GPSR. This gives a speed up of roughly 50%, which is fairly effective with a very negligible drop in accuracy (<0.5%) because of the lower precision.

2) Batch processing with matrices

The way I use GPSR is to find the sparse representation of one test face from a set of training faces. It is not unusual to have tens of thousands of training images, each with a size of 100-300 after PCA dimensionality reduction. Say we have 25,000 training images each with dimensionality of 100. Using GPSR, the bottleneck in finding the sparse representation of a test image involves multiplying a 25,000×100 matrix by a 100-element vector many times until some convergence (I am of course leaving out some other things that happen for simplicity’s sake). By matrix multiplication properties, we can process multiple test images in batch by concatenating their vectors into a 100xN matrix. Although it accomplishes the same thing, this single matrix multiply has the advantage of a single BLAS call where things can remain in L1 and L2 cache longer, giving us a much higher speedup. The problem here is that some test images converge faster than others, but we can get around this by dynamically inserting and removing test image vectors into our concatenated “test images” matrix as needed. This yields about a 5X improvement with a batch size of 256, bringing us to about a 10X improvement over the original GPSR – for this particular application, of course.
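The batching idea rests on a simple identity: multiplying A by a matrix whose columns are the test vectors gives the same numbers as multiplying A by each vector separately. The sketch below shows only that equivalence, using a naive triple loop. `Matrix`, `multiply`, and `column` are hypothetical helpers, and the naive loop gets none of the speedup discussed above; that comes from handing the whole batch to an optimized BLAS routine in a single call.

```cpp
#include <cstddef>
#include <vector>

// Row-major dense matrix: element (r, c) lives at data[r * cols + c].
struct Matrix {
    std::size_t rows, cols;
    std::vector<float> data;
    Matrix(std::size_t r, std::size_t c) : rows(r), cols(c), data(r * c, 0.0f) {}
    float& at(std::size_t r, std::size_t c) { return data[r * cols + c]; }
    float at(std::size_t r, std::size_t c) const { return data[r * cols + c]; }
};

// C = A * B. With one test image per column of B, one call replaces
// B.cols separate matrix-vector products.
Matrix multiply(const Matrix& a, const Matrix& b) {
    Matrix c(a.rows, b.cols);
    for (std::size_t i = 0; i < a.rows; ++i) {
        for (std::size_t k = 0; k < a.cols; ++k) {
            const float aik = a.at(i, k);
            for (std::size_t j = 0; j < b.cols; ++j) {
                c.at(i, j) += aik * b.at(k, j);
            }
        }
    }
    return c;
}

// Copy column j of m into a single-column matrix (one test image).
Matrix column(const Matrix& m, std::size_t j) {
    Matrix v(m.rows, 1);
    for (std::size_t r = 0; r < m.rows; ++r) v.at(r, 0) = m.at(r, j);
    return v;
}
```

For any A and B of compatible sizes, column j of `multiply(A, B)` matches `multiply(A, column(B, j))`, so removing a converged test image from the batch just means dropping its column.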

3) GPU acceleration

A final step to optimizing the GPSR algorithm is to move to specialized hardware, such as graphics cards via a CUDA interface. Luckily, nVidia provides a CUDA implementation of BLAS called cuBLAS. How much of a speedup would we be likely to attain using this? Since matrix multiplication is still roughly 50% of my optimized GPSR algorithm, I took a look at matrix multiplication on various architectures.

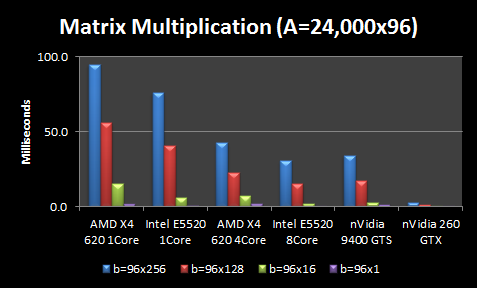

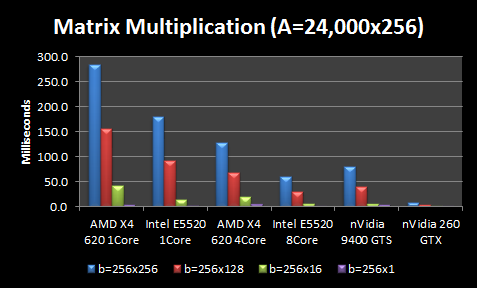

I conducted two test configurations with some simplifying assumptions. First, I assumed all matrix sizes are multiples of 16 because cuBLAS seems to prefer this memory configuration. So I assume our training matrix A is 24,000×96 and test images are 96×1. I consider both the unbatched case and batches of test images of various sizes: 16, 128, and 256. Thus, the test images matrix b ranges in size from 96×1 to 96×256.

All tests are averaged over 10-100 matrix multiplications with single precision matrices. I tested 4 CPU configurations and 2 GPU configurations:

- AMD X4 620 1Core ($100): Low-end 2.6 GHz Athlon quadcore system without L3 cache with only 1 core used

- AMD X4 620 2Core ($100): Low-end 2.6 GHz Athlon quadcore system without L3 cache with all 4 cores used

- Intel E5520 1 Core ($375): High-end 2.26 GHz Xeon quadcore system with only 1 core used

- Intel E5520 2 Core ($750): Two high-end 2.26 GHz Xeon quadcores with all 8 cores used

- nVidia 9400 GT ($40): Low-end GPU with only 16 CUDA cores

- nVidia 260 GTX ($200): Middle-end GPU with 216 CUDA cores

NOTE: CPU configurations used MATLAB, which in turn usually uses CPU-optimized BLAS libraries like MKL on Intel or ACML on AMD. For a quick comparison, I benchmarked the fast GotoBLAS against Matlab with the MKL and found GotoBLAS only 2% faster. Since this performance increase is fairly negligible, I didn’t pursue testing different BLAS packages.

NOTE: Memory transfer is not included in these comparisons because I can leave the large A matrix on the GPU unmodified as I process thousands of test images. Thus my problem is floating-point bound rather than memory bound.

I also consider the same scenario except the image size is 256 instead of 96 (so more PCA dimensions).

The CPU results are not terribly surprising. More expensive CPUs outperform less expensive CPUs, but the performance gains for more expensive CPUs are not proportional to the extra cost (i.e. 4X increase in CPU cost doesn’t give you anywhere close to a 4X performance boost). Throwing more cores at the problem helps, but again the scaling is nowhere near perfect. An extra 4 cores gives you ~2X boost and 8 cores gives you ~3X boost in performance. Larger matrices see better scaling. The interesting part is the GPUs. A $40 nVidia GPU is better than the $100 AMD CPU using all 4 cores, and performs about equivalently if you factor in the memory transfer to the GPU.

In fact, the $40 GPU is only beaten by my $750 dual Intel CPUs with 8 cores, which is kind of astonishing. And the middle-end $200 nVidia GPU outperforms everything by a wide margin, averaging 5-30X better. However, at these high processing speeds, data transfer becomes the overhead. Thus, the Intel 8 Core still beats the nVidia 260 GTX if we factor in memory transfer of the large A matrix for every multiplication. However, I only have to transfer the large A matrix of ~25,000 training images to the GPU once. Thus for my application, it could still be tremendously faster to use the GPU.

Although it doesn’t show well on this graph, matrix multiplication with b=96×1 is actually 1.5X slower than with b=96×16, leading me to believe that GPUs do have some interesting side-effects when it comes to matrix sizes, block optimizations, etc. It is good to know these intricacies because you could get significant speedups just by zero-padding matrices so the dimensions are divisible by 16.
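
The zero-padding idea can be sketched as follows, using plain Python lists purely for illustration (`round_up` and `zero_pad` are hypothetical helper names, and a real implementation would pad the arrays handed to cuBLAS). Padding with zeros leaves the product unchanged in the original rows and columns, so the extra entries can simply be ignored afterwards.

```python
def round_up(n: int, multiple: int = 16) -> int:
    """Smallest multiple of `multiple` that is >= n."""
    return ((n + multiple - 1) // multiple) * multiple

def zero_pad(matrix, multiple=16):
    """Return a copy of `matrix` (a list of rows) padded with zeros so that
    both dimensions are divisible by `multiple`."""
    rows = len(matrix)
    cols = len(matrix[0]) if rows else 0
    padded_rows = round_up(rows, multiple)
    padded_cols = round_up(cols, multiple)
    # Extend each existing row, then append all-zero rows.
    out = [list(row) + [0.0] * (padded_cols - cols) for row in matrix]
    out += [[0.0] * padded_cols for _ in range(padded_rows - rows)]
    return out

# A 25,000×100 training matrix would be padded to these dimensions:
print(round_up(25000), round_up(100))
```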

I haven’t actually coded up the GPSR algorithm in CUDA simply because my existing 10X speedups seem to be working pretty well so far. And if I run GPSR on multiple datasets simultaneously, one on each core, I should be able to get much better parallelism than distributing each matrix multiplication over many cores. However, it is interesting to know that a $40 graphics card and some CUDA programming could outperform a brand new quad-core machine.

So thanks to some urging by a fellow PhD student, I’ve uploaded a new version of my Facebook Downloader and swapped the site over to the new WordPress version. The old site is still there so old links work, which means eventually I’ll have to do individual redirects so that people following old links get the newest content. Oh well, something for another day.

It’s that time in my PhD program where the scary issue of the “thesis” begins its ritualistic haunting. What is your thesis going to be about? Do you have an outline yet? Who is your committee going to consist of? Ahh! Too much! So I do what I do best under stress: take a deep breath and see what other people have done. So I downloaded the past few years of Robotics thesis proposals and dissertations. Then because I love statistics so much I ran some numbers.

The average thesis proposal (in the RI department in the last 3 years, roughly) has a length of 48 pages, with a 17-80 page range and an average of 94 references. The average thesis dissertation has 165 pages, with a 90-364 page range and 89 references. The confusing thing here is thesis proposals have more references than dissertations. I’m not sure how that works except maybe students over cite during the proposal stage to make it look like they did a good job at their background/related works sections.

Anyhow, I know roughly the structure of a thesis proposal/dissertation – now if only I had some idea what to actually put IN my thesis…

Operating rooms are scary places at the best of times, and when going under the knife, you’d like to know that a surgeon’s itchy nose or her morning’s double espresso isn’t going to cause any accidents. This is where medical robotics can ride in to the rescue. As a PhD student developing intelligent surgical instruments, I design algorithms that aid surgeons in procedures. One of the fundamental problems of helping someone is knowing what they are trying to do. Handing a hammer to a construction worker when he is pouring concrete is not terribly helpful.

A popular solution here is to use computer vision: attach some cameras to the microscope, see what the surgeon is doing, figure out what her goals are, and then provide assistance. For instance, microinjections of veins smaller than a human hair are very useful procedures that are currently too difficult to perform reliably in the operating room. Our tool reduces surgeon tremor and uses image analysis at high magnification to guide the needle into the vein, increasing the success rate of the procedure. Unfortunately, many of the useful algorithms are difficult to perform in real-time. Furthermore, different algorithms are often required in parallel: several methods might track the tip of the instrument, another builds 3D representations of the tissue surface, and still others run analysis to detect and diagnose blood leakages or diseased areas.

Currently, I run a number of algorithms and am forced to make compromises even with powerful quadcore machines. With modest stereo 800×600 resolution cameras that run at 30 Hz, 5 gigabytes of images need to be processed every minute. This increases to upwards of 40 GB/min with high speed or high-definition cameras. Trying to analyze the sheer number of pixels coming in is much like trying to drink from a fire hose. Simply encoding and saving the video in real-time for post-op review becomes challenging. Consequently, I run tracking algorithms at lower resolutions, sacrificing precision for speed. Diagnoses are performed pre-operatively or manually on demand during pauses in the procedure. 3D representations of the tissue are built initially and updated only infrequently. This affects the level of assistance the system provides to the surgeon.
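
The 5 GB/min figure can be reproduced with a quick back-of-the-envelope calculation. The sketch below assumes two cameras (stereo) and uncompressed 24-bit RGB at 3 bytes per pixel; neither assumption is spelled out in the text, and the function name is made up.

```python
def video_data_rate_gb_per_min(width, height, fps, cameras=2, bytes_per_pixel=3):
    """Raw (uncompressed) video data rate in gigabytes (1e9 bytes) per minute."""
    bytes_per_minute = width * height * bytes_per_pixel * cameras * fps * 60
    return bytes_per_minute / 1e9

rate = video_data_rate_gb_per_min(800, 600, 30)
print(f"{rate:.2f} GB/min")
```

Under those assumptions the stereo 800×600 setup at 30 Hz comes out to a little over 5 GB every minute.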

In fact, significant amounts of my time go towards optimizing C++ or even assembly level code to maximize performance. Reducing L1 cache misses, utilizing branch predictions, and rewriting code to take advantage of SIMD instructions let me run more algorithms and provide better aid to the surgeon. Even with such optimizations, I am still hitting the limits of what four cores can do. However, a most encouraging aspect of computer vision is its often embarrassingly parallelizable nature. With a 48 core machine, I could do a significantly better job. I would move to higher definition video for much greater precision, parallelize my tracking algorithms for enhanced speed, run advanced stereo algorithms for high quality 3D reconstructions, and thus more effectively provide the surgeon with aids that make the operating room a less scary place.

So this is twice that I have been upstaged by my lack of Google skills. I have some small background in compilers, languages close to the processor, and optimization. So when I see something obviously lacking in even rudimentary speed-ups, I think about taking a look and doing a better job myself. Usually I restrain myself otherwise I’d spend all my time re-writing Matlab to support NOT ridiculously slow for loops (please please please Mathworks, can’t you hire a decent compilers person or two and at least get your for loops to run faster than QBasic?). But sometimes, when running batch processes takes days, I start getting involved.

My first attempt was to make some Chi-Squared distance metric Matlab scripts run faster – a friend needed to run this over gigabytes of data, which was going to take months using his simple Matlab script. Months is bad so I sat down with him and we optimized the Matlab scripts, then moved to C with a mex interface, then delved into assembly language, and then into the SSE2 optimizations. In the end we had something that was an order of magnitude faster, so it would only take several days to run. Of course, a few days later I found a guy at Google who had done the exact same thing. And oh look, now that I’m trying to find a link to his website, I’ve found somebody else with similar code with Matlab wrappers. Sigh…that was a wasted night and a half. Coulda gone to sleep at a decent hour instead of going home at 7 am after working all night.
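
For reference, here is a minimal Python sketch of a Chi-Squared distance between two histograms. The original scripts are not shown, so the exact formula is an assumption: this uses one common convention, half the sum of (x - y)² / (x + y), and skips bins where both entries are zero.

```python
def chi_squared_distance(x, y):
    """Chi-Squared distance between two non-negative histograms of equal length.

    Uses the convention 0.5 * sum((x_i - y_i)^2 / (x_i + y_i)); bins where
    x_i + y_i is zero contribute nothing.
    """
    if len(x) != len(y):
        raise ValueError("histograms must have the same length")
    total = 0.0
    for a, b in zip(x, y):
        denom = a + b
        if denom > 0:
            total += (a - b) ** 2 / denom
    return 0.5 * total

print(chi_squared_distance([1, 0], [0, 1]))
```

The inner loop is a handful of arithmetic operations per bin with no dependencies between bins, which is the kind of code that tends to benefit from SIMD instructions such as SSE2.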

And the story doesn’t end there! When running some of my algorithms, I was seeing rather slow JPEG decoding speeds so I went out searching for faster implementations. I found the libjpeg-SIMD version, but it was only 32-bit and since it was coded in assembly language, porting it to 64-bit would be non-trivial (I only use 64-bit for my research work so I can access more than 3 GB of RAM). I figured somebody must have done it somewhere on the Internet, but nope, I couldn’t find anything. I even spent an extra day just researching this to prevent the same thing that happened with the Chi-Squared code from biting me once again. The closest I could find was some discussion on tightvnc-devel about attempting to port it to 64-bit, but it didn’t seem like anything came out of it (as Internet discussions have a tendency to do, the threads degenerated into open-source vs. Microsoft and cygwin vs. Mingw). So I spent a good 4-5 nights porting the key bits of libjpeg SIMD to 64-bit one frightful file at a time, as detailed in a previous blog post. And of course it turns out I again duplicated somebody else’s work. One of the developers of TightVNC posted a comment on my blog entry announcing the completion of the port, informing me that they had indeed successfully ported libjpeg SIMD to 64-bit a while back and it was in their SVN. Sigh…on one hand that’s really discouraging because, well, that is a good 25 or so hours I could have spent doing better things (like sleeping!), but on the other hand I did get a brush-up on assembly language and especially some of the new 64-bit assembly language extensions.

So moral of the story is search hard and long so you don’t have to re-invent the wheel.

As a computer vision/controls grad student at CMU, I often work with very large image/video datasets. My latest project involves a terabyte or so of image data – all compressed in JPEG format to save space. I usually analyze the data in batches and am interested in maximum speed for minimum CPU usage. Recent 1 TB harddrives have linear read speeds of 50-100 MB/s. With a quad-core or higher processor, often the bottleneck is not the CPU. This is especially true if you want to do simple image processing (image resizing, corrections, converting, face detection, etc) that can sustain significant throughput. The bottleneck is often decoding the data.

JPEG Decoders

In my case, I want the fastest JPEG decoder that could take a JPEG image in memory and decompress it into a RGB pixel array. I didn’t find much in the way of JPEG decoder benchmarks so I decided to evaluate some of the more promising ones I found. Here is the roundup:

- Mini JPEG Decoder: A simple C++ port of NanoJPEG that is one single file header and very easy to use.

- STBI JPEG Decompression: Another simple one C file JPEG decompression algorithm.

- Libjpeg: Probably the gold standard for JPEG encoding/decoding, but developed quite a while ago (1997) and not entirely trivial to use. Libjpeg has the additional annoyance that the original source code doesn’t support decoding from memory, so I used the TerraLib wrapper class and their libjpeg extension that adds support for reading/writing JPEGs directly to and from a buffer in memory.

- Matlab (commercial): While not a JPEG decoder, it does contain one and is a widely-used package for researchers, so it is useful to compare against.

- Intel IPP (commercial): Perhaps one of the most well known “optimized” libraries for the Intel platforms. It contains a UIC JPEG decoder that is highly optimized for most varieties of Intel CPUs (it has separate code that is specifically optimized for each type of Intel CPU).

- Libjpeg SIMD (page in Japanese): A port of the popular libjpeg to use modern SSE processor instructions by optimizing the core routines in assembly language. The interface is supposed to be compatible with libjpeg. Currently only in 32-bit. I used the same TerraLib libraries for using memory buffers with libjpeg as before.

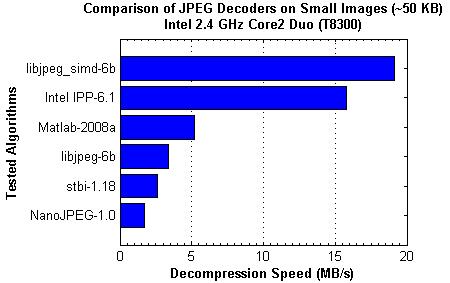

Comparison of JPEG Decoding Speeds

To compare these 6 JPEG decoders, I devised an experiment: Load ~2,000 small images (each image was ~50 KB in size for ~100 MB of compressed JPEG data) and decode them. The 102 MB of compressed data expanded out to 1526 MB uncompressed, or a ratio of 6.7%. That’s pretty good compression there. The whole 100 MB was loaded into memory to avoid harddrive slowdowns (4 GB RAM with no paging file) and each algorithm was run 3 or more times to get an average. The JPEG data was directly decoded from memory except in the case of Matlab, where the JPEG files were read off a virtual harddrive backed by memory using ImDisk Virtual Disk Driver. So all results should be very comparable. Below is a graph detailing the decompression speeds in MB/s on my Intel 2.4 GHz Core2 Duo laptop. I compiled everything with Visual Studio 2008 Professional with all optimizations on, including SSE2 instructions in hopes that the compiler could optimize the plain C or C++ code.
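
The shape of such a benchmark can be sketched in Python. Everything here is an illustration under assumptions: `measure_throughput` is a made-up name, `zlib.decompress` stands in for a JPEG decoder because the Python standard library does not include one, the data is synthetic, and 1 MB is taken as 10^6 bytes. It does not reproduce the numbers in this post.

```python
import time
import zlib

def measure_throughput(decode, buffers, runs=3):
    """Average decode speed in MB/s of compressed input over `runs` full passes.

    `decode` is any callable taking one compressed buffer; all buffers are
    already in memory, so disk speed does not affect the measurement.
    """
    total_mb = sum(len(b) for b in buffers) / 1e6
    elapsed = []
    for _ in range(runs):
        start = time.perf_counter()
        for b in buffers:
            decode(b)
        elapsed.append(time.perf_counter() - start)
    return total_mb / (sum(elapsed) / len(elapsed))

# Stand-in data: 50 identical compressed buffers held in memory.
raw = bytes(range(256)) * 200
buffers = [zlib.compress(raw) for _ in range(50)]

speed = measure_throughput(zlib.decompress, buffers)
print(f"{speed:.1f} MB/s (compressed input)")
```

Swapping in a real decoder only requires passing a different callable, which keeps the timing loop identical across the libraries being compared.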

The results actually surprised me in some ways. Being focused on simplicity, STBI and NanoJPEG’s poor performance was to be expected. Also, Matlab does try to make the core routines fairly fast. However, I know Matlab uses the Intel MKL math libraries, so it surprised me that Matlab wasn’t using the Intel IPP libraries for faster JPEG decompression. Of course, I was using Matlab 2008 so perhaps newer versions have added this feature. What surprised me the most was that the libjpeg SIMD outperformed the Intel IPP. Intel has put a lot of effort into providing libraries that squeeze the most out of their processors, so it was a definite shock to see them outperformed. The results were fairly encouraging overall, especially with libjpeg SIMD performing over 3X faster than Matlab and over 4X better than the gold standard libjpeg. It is unfortunate it is only 32-bit.

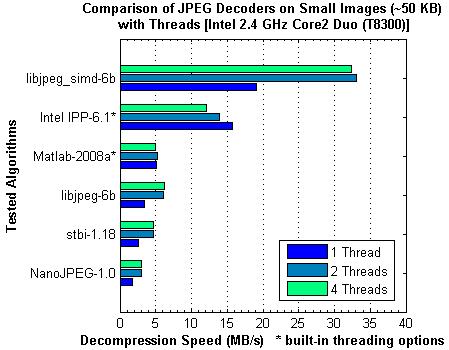

Speeding Up JPEG Decompression on Multi-Core Systems

Given that the top performer’s speed in JPEG decoding is still ~2-5X slower than what current harddrives can pump out, it makes sense on multi-core/processor systems to use multiple threads to parallelize the decoding. To test how each algorithm scales with multiple threads, I tried the same test except splitting the ~2,000 images over 1, 2, or 4 threads for decoding. I tested on a 2 core system, so realistically I should not see any improvement with the 4 thread decoding. Matlab and the Intel IPP both had built-in options to use multiple cores when performing operations so I used these options instead of splitting the files off into different threads. The results of these tests are shown below.

The results in general make sense: 2 threads is roughly twice as good as using 1 thread, but using 4 threads doesn’t help and in some cases hurts performance. The two big trend-breakers were Matlab and the Intel IPP. It seems Matlab has not added any multi-threading capabilities to its JPEG decoding routines since the performance difference was negligible. However, the Intel IPP performance gets worse the more threads you add! This doesn’t make sense at all, unless you take a look under the hood. While I am splitting my 2,000 files over multiple threads (say 1,000 images for Thread 1 to decode and 1,000 for Thread 2) for the rest of the algorithms, the Intel IPP package is trying to use multiple threads to decode each image (so part of one image gets decoded by Thread 1 and the other part by Thread 2). This works well if you need to load big images, but for small images, the overhead of creating and destroying threads for each image is so much that it not only counteracts the gains but causes worse performance as additional threads are used. While I didn’t test running the Intel IPP in the same manner I did the rest of the algorithms, I suspect the results would improve: 2 threads would nearly double performance, and moving from 2 to 4 threads would have very little effect at all.
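
The "split the files across threads" strategy can be sketched as below. This is a hedged Python illustration, not the code behind the benchmark: `split_evenly` and `decode_parallel` are hypothetical names, and `zlib.decompress` again stands in for a JPEG decoder. In CPython, threads only give a real speedup when the decoder releases the interpreter lock, so treat this as showing how the work is divided rather than as a benchmark.

```python
import zlib
from concurrent.futures import ThreadPoolExecutor

def split_evenly(items, parts):
    """Split `items` into `parts` contiguous chunks whose sizes differ by at most one."""
    size, extra = divmod(len(items), parts)
    chunks, start = [], 0
    for i in range(parts):
        end = start + size + (1 if i < extra else 0)
        chunks.append(items[start:end])
        start = end
    return chunks

def decode_parallel(decode, buffers, threads):
    """Decode every buffer, giving each thread its own chunk of whole images."""
    chunks = split_evenly(buffers, threads)
    with ThreadPoolExecutor(max_workers=threads) as pool:
        # Each worker decodes complete images; no single image is shared.
        results = list(pool.map(lambda chunk: [decode(b) for b in chunk], chunks))
    # Flatten back into the original order.
    return [image for chunk in results for image in chunk]

payloads = [bytes([i]) * 1000 for i in range(10)]
buffers = [zlib.compress(p) for p in payloads]
decoded = decode_parallel(zlib.decompress, buffers, threads=2)
print(len(decoded), decoded == payloads)
```

Because threads are created once and each one works through many images, the per-image overhead of starting and stopping threads disappears.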

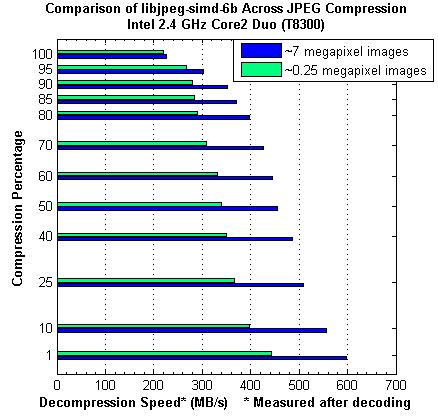

Effects of JPEG Compression Level and Image Resolution

Finally, I was curious about how the JPEG compression level (usually specified 1-100%) and the image resolution affected the decompression speed. This is important if you are looking at the storage vs. decompression speed trade off. Let’s say you have 800 MB of uncompressed images (BMP, PPM, RAW, etc) and want to save them for maximum decompression speed later. What compression is best? What size should you store them at? To analyze this, I took typical large images from my 7 megapixel camera and the smaller, web-sized images at 1/4 megapixels and compared the decompression speed as I varied the JPEG compression level. NOTE: I have changed how I measure decompression speed here from MB/s compressed JPEG to MB/s uncompressed JPEG. In other words, I am measuring how fast I can decode the 800 MB since the compressed JPEGs change size as I change the compression ratio. For these experiments, I only used the best performing JPEG decoder, libjpeg SIMD.

At first, the results caught me off guard. I was expecting lower compression percentages to take longer to decode as more work was being done for the compression. However, I suppose when you think about it, the best possible compression would be to reduce each image into one pixel with the average RGB – and to decompress that you just fill all the pixels in the image with your average RGB which would be really fast. So higher compression percentages don’t really gain you any speed increases, which is interesting. Also, it is interesting to see that the larger images decode much faster than smaller images, which is probably due to caching of images and reduction of setting up the JPEG decoder for each image (reading headers, allocating/deallocating, etc, etc).

Conclusion

From these results, we can conclude that libraries optimized for modern processors are several times faster than their simple C/C++ counterparts. Also, JPEG decoding generally scales pretty well with the number of threads as long as each image is independent of the others. Trying to use multiple threads to decode a single image is counterproductive for small JPEG images, and only somewhat useful for larger images (I tested the Intel IPP with the 7-megapixel images and found that 2 threads is only ~1.3X faster than using 1 thread, compared to ~1.7X faster for using threads on separate images). When choosing a compression setting, lower is better for both conserving storage space and decompression speed. Also, decompressing smaller pictures is noticeably slower than decompressing larger pictures.

So, let’s talk about the take home message. What is the best JPEG decoder for you? It depends on your needs:

- Simple, easy, leisurely decompression: NanoJPEG or STBI. I find NanoJPEG easier, just include one *.h file and you are done. It’s got a nice little class and everything.

- Fastest possible decoding of large images: Intel IPP is your friend because you can use multiple threads per image.

- Fast decoding of images, only need 32-bit: libjpeg SIMD is really amazing, but it currently is only 32-bit and takes some work to compile (the website does have precompiled binaries that I haven’t tried). For instructions on compilation, check out the post by sfranzyshen (the exact instructions are a bit down on the page, search for SIMD).

- Fairly fast, stable, 64-bit decoding: libjpeg is the gold standard and is fairly good for what it does.

So there you have it, a brief analysis of JPEG decoding. Go out and enjoy some JPEG decompression goodness!

So my first journal paper was written in 13 days. My advisor originally wanted it to be written in a single day but I certainly do not possess such superpowers – not in the least. The reason for the massive rush was my advisor promised a journal paper in the grant proposal by a certain timeframe (which has long since passed). Long story short, I constructed a journal paper from the proposal and an earlier conference paper in record time. Oh and learned how to calculate p-values (ttest2 in Matlab). Anyhow, I did a final proof-read, corrected some errors and sent the last draft off to my advisor at 6 AM. I woke up at 9:30 AM, had a bunch of corrections to make, and we had a nice back and forth until lunchtime. After my advisor submitted the paper, I went back to sleep around 2, thinking I would get a good solid couple of hours nap time. Alas this was not to be as my advisor called me 30 minutes later to schedule a meeting discussing what I would be working on next. Sigh…

One of the most annoying things was the format of this paper. They required Word and not just that, but they insisted on Word 1997 format with figures in TIFF and tables on separate pages. Annoying to say the least. Unfortunately for me, I had written the paper in Word 2007 with the new fancy equation editor they introduced. Saving the document in the old format converted all my beautiful equations into terribly rendered picture representations of my equations. It made me want to cry. I had exactly 100 equations in my paper (and no I didn’t aim for that number) so I didn’t want to retype them. So I resorted to some VBA trickery. First I increased the font of the Word 2007 document by 5-10X. Then I saved to the old format and my giant font equations got saved as giant graphics. Then I wrote a VBA script to go through the document and resize all my equation graphics to get high DPI equations. This approach met with limited success. I was successful, but the side effects were terrible. First, the vertical alignment was way off so I had to wind up cropping the graphics to pad the bottom of the equation so that it aligned with the rest of the sentence. I was all happy that this worked until I realized that this totally messed up the print to PDF function. Cropping the equations even in the slightest caused all the equations to come out with black backgrounds. Gar! Foiled! Finally, I decided I’d re-write them all by hand in the old version of MathType so they would be compatible with the old Word format. But to my surprise, I discovered the new MathType library has a function to automatically convert Word 2007 Equations to old Equation 3.0 style equations. Voilà! It worked quite well although it made a few mistakes and mangled some of my paragraph formatting. But it was way better to watch it work for several minutes scrolling through my document than to convert the equations by hand!

After only getting 3 hours of sleep yesterday due to me creating the next homework for computer vision and an early morning meeting with my advisor, I learned that all the testing has to be done this week for the paper abstract deadline next week. Oh joy joy. So instead of going home to sleep off the afternoon, I had to do horror of horrors…actual work. However, it was SOOO cold down in the dungeon. Usually it is quite chilly and I’ll wear a light sweater but it was just completely ridiculous today. Somebody must have left the AC on manual override or maybe it was just sucking in 40F air from outside. Anyhow, there is a giant vent right up and to the left of my workstation. And with the surgeon coming in the following morning, I was going to be working most of the night. So I decided to do something about this infernally powerful AC draft. After several ideas, I came up with a box wedge solution. The only problem? What do I wedge the box against to block the vent? Turns out the solution is to construct a giant stack of boxes. And it worked! Oh the flush of warm success cascaded down on me like the harsh crushing clank of a dropped needle.